One of the difficulties with using AWS Lambda is that, because you don’t provision any virtual machines and the unit of deployment is basically a zip package containing your jar files (or script files in Python/JavaScript land), there’s no easy way to provide a configuration file alongside your lambda during deployment. I’ve now updated the sample Lambda to pull in configuration from an environment variable, which is dead simple.

Update: AWS Lambda can now be configured using environment variables, which makes the majority of this post obsolete. I’m leaving it here for posterity, but if you want to configure your AWS Lambda then using environment variables is the best way to do it.
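To give a feel for the environment variable approach, here is a minimal sketch in plain Java. It is not the code from the sample Lambda: the `LambdaConfig` class, the `GREETING` variable name and the `Hello` default are all hypothetical names made up for illustration. The only real API used is `System.getenv()`, which is where variables set on the function's configuration show up at runtime.

```java
import java.util.Map;
import java.util.Optional;

// Hypothetical config holder; the class, variable name and default are illustrative only.
final class LambdaConfig {
    // Assumed name of the environment variable set on the Lambda function.
    static final String GREETING_VAR = "GREETING";
    // Fallback used when the variable is missing or blank.
    static final String DEFAULT_GREETING = "Hello";

    private final String greeting;

    // Taking a Map keeps the class testable without touching the real environment.
    LambdaConfig(Map<String, String> env) {
        this.greeting = Optional.ofNullable(env.get(GREETING_VAR))
                .map(String::trim)
                .filter(value -> !value.isEmpty())
                .orElse(DEFAULT_GREETING);
    }

    // Reads from the process environment, which is how the function would see its settings.
    static LambdaConfig fromEnvironment() {
        return new LambdaConfig(System.getenv());
    }

    String greeting() {
        return greeting;
    }
}
```

A handler would call `LambdaConfig.fromEnvironment()` once, for example in its constructor, and reuse the result across invocations. Passing the map in through the constructor is just a design choice to make the fallback behaviour easy to test.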

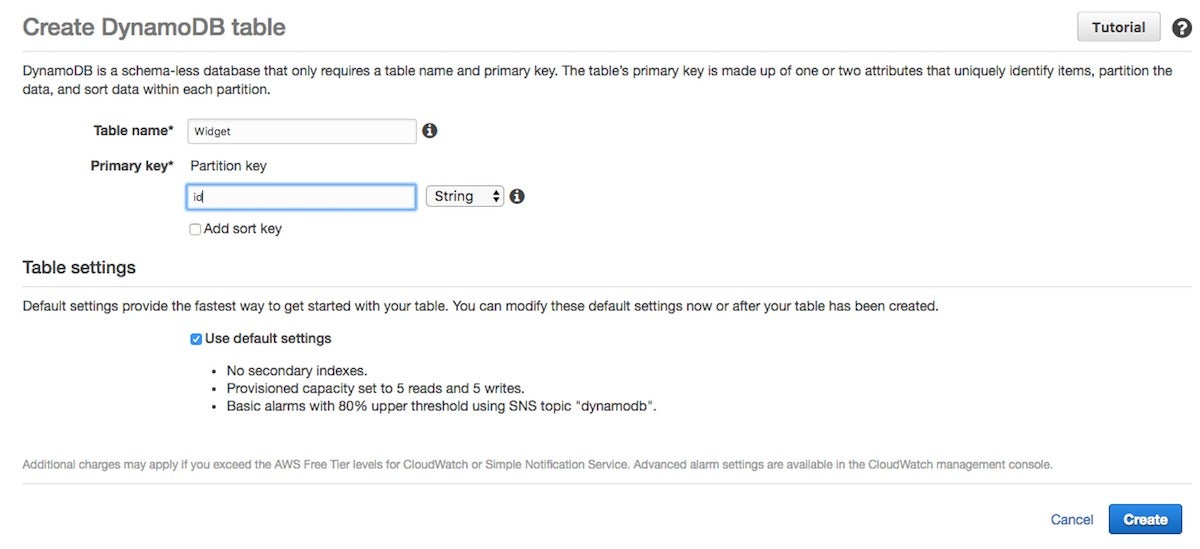

AWS DYNAMODB CLIENT CONFIGURATION CODE

The code for the helloWorldFunction lambda is available on GitHub. It uses an API Gateway to expose access to the lambda, takes a path parameter as the input request object, pulls in DynamoDB config to set part of the response, and returns a mustache-templated HTML response to the user. Using one of the innovation days that Kainos kindly provides me each month, I’ve been playing about with AWS Lambda because I’ve heard an increase in hype from recent conferences and also because we’re starting to look at this way of developing application services on some of our projects. This ‘serverless’ approach means you pay only for data transfer and the brief time your function is actually executing, which is attractive for cost-saving reasons as well as making it easy to compose functions together and scale in an event-driven system (More queue items? Just invoke more lambdas to process them). AWS Lambda will provision some ephemeral compute resources, run your lambda function, and then throw away the underlying nodes afterwards.

You can write a request handling class which will be invoked by a predetermined event such as an external API call, in response to a queue item being created, or on a schedule (amongst others). AWS Lambda provides an interesting, highly scalable platform for running functions as a service.